SaaSpocalypse How, Redux

Due Diligence for the Service-as-Software Era

I. The Mispricing

The market repriced every SaaS incumbent on the fear that AI would eat their moats, then never applied the same scrutiny to the AI companies themselves. Their gross margins average 45%, closer to managed services than to software, yet they carry 25–30x revenue multiples. Exact figures vary by cohort and methodology; the magnitude of the mismatch between margin structure and valuation multiple does not. The full economic case is in The Mirage of AI ROI.

The reason this persists is that the market treats “AI” as a valuation category. It is a delivery mechanism. Technology valuations have sorted into three tiers for decades: Tier 1, Services, at 0.3–3.0x revenue; Tier 2, SaaS, at 3–8x median; and Tier 3, Platform & Infrastructure, at 10–25x. Tier 3 is AWS, Nvidia, Snowflake, CrowdStrike, Palo Alto, Cloudflare, Datadog: companies with ~70% gross margins, multi-billion-dollar revenue, and platform gravity that deepens with usage. The boundaries shift with market conditions, but the ordering never inverts.

Even if the market temporarily creates a fourth tier, underwriting still requires proof of sublinear verification cost and controllable supplier economics. There are services companies that use AI, software companies that use AI, and infrastructure companies that build AI. Anthropic, Databricks, and Palantir belong in Tier 3 — they build or operate foundational infrastructure, control their own platform economics, and serve as layers other companies build on. The application-layer companies raising at 25–30x — Harvey, Sierra, Glean, Dialpad — sit on top of that infrastructure, consuming someone else’s API, layering on verification, and selling outcomes in categories already priced as services. The Finro AI agent dataset (210 companies, 11 niches) already shows the market sorting within AI — HR, PropTech, Sales agents trade at 3–12x, overlapping SaaS. It just hasn’t extended that logic across categories to recognize that a Tier 1 AI company is the same asset class as an IT services firm with a different pitch deck.

The counterargument: AI commoditizes cognition like PCs commoditized computing, demand expands as costs collapse, and the winners will be those who own data, compute, energy, and verification. That framing has saturated investor discourse since the February sell-off. It is also a Tier 3 thesis. The value accrues to the infrastructure layer — not to the application-layer firms reselling it per outcome. If AI commoditizes cognition, the company selling commoditized cognition is on the wrong side of its own disruption thesis.

Getting the tier wrong is a 70–97% valuation swing. Technical due diligence is where the category claim should get falsified — and almost never is. TDD frameworks were built for deterministic software, and no one has updated the methodology to falsify a category claim that didn't exist five years ago. The narrative case has been made. What follows are the questions the current playbook doesn’t ask — four dimensions where the horseless carriage still gets inspected for hay consumption.
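The size of that swing follows from the tier multiples quoted above. The sketch below is illustrative arithmetic only. It assumes the haircut is computed as one minus the ratio of the correct multiple to the assumed multiple on the same revenue, and it compares the low end of each tier band. That pairing is an assumption: the text does not say how its 70–97% range was derived, though this pairing happens to reproduce it.

```python
# Revenue-multiple bands as quoted in the text (low, high).
TIER_MULTIPLES = {
    "services": (0.3, 3.0),
    "saas": (3.0, 8.0),
    "platform": (10.0, 25.0),
}


def haircut(assumed_multiple: float, correct_multiple: float) -> float:
    """Fraction of value lost when a company priced at assumed_multiple
    is re-rated to correct_multiple on unchanged revenue."""
    return 1.0 - correct_multiple / assumed_multiple


# Assumption: compare the low end of each band.
platform_low = TIER_MULTIPLES["platform"][0]
to_saas = haircut(platform_low, TIER_MULTIPLES["saas"][0])
to_services = haircut(platform_low, TIER_MULTIPLES["services"][0])

print(f"platform -> saas:     {to_saas:.0%}")
print(f"platform -> services: {to_services:.0%}")
```

Other pairings of band endpoints give different figures, so the range is best read as an order of magnitude rather than a precise bound.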

II. The Diagnostic

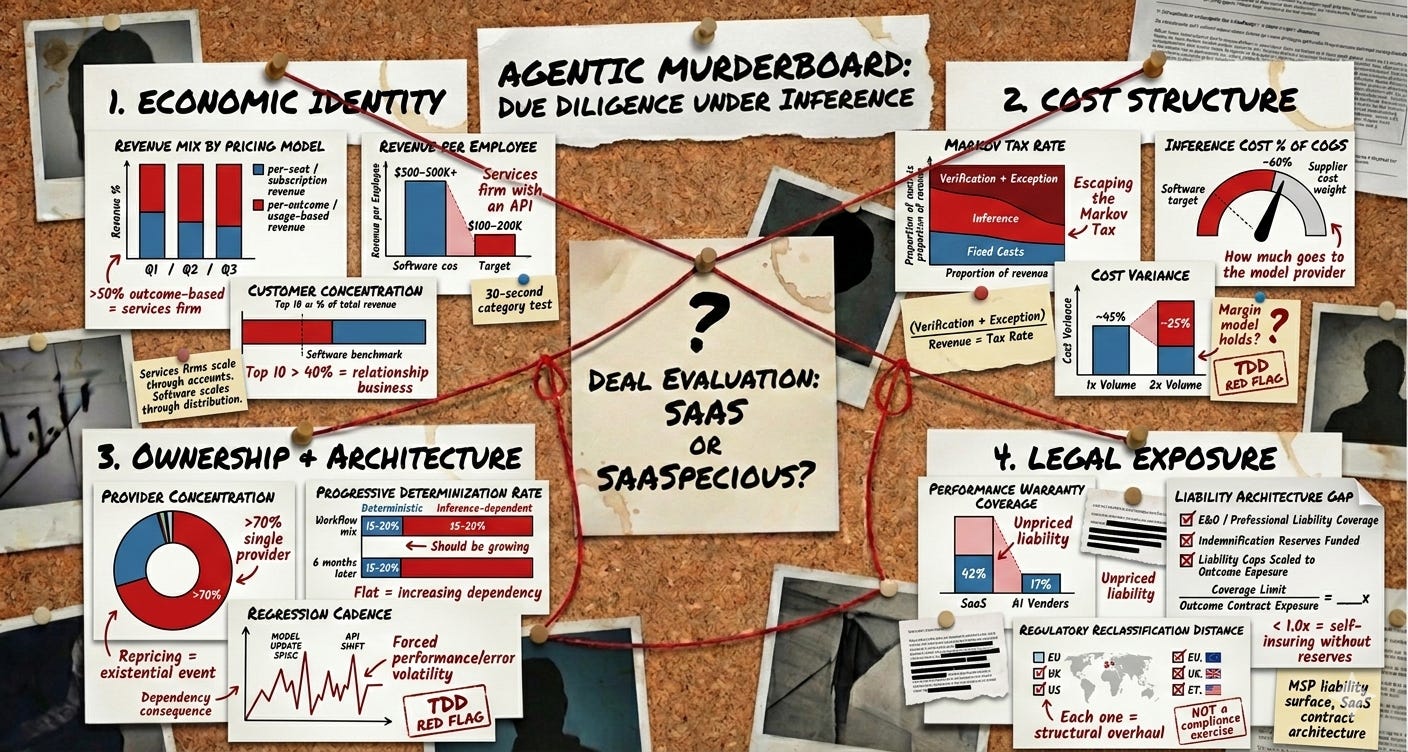

1. Economic Identity

No TDD methodology evaluates whether the target’s COGS structure, pricing model, and delivery risk map to software or services — despite a 3–6x difference in appropriate multiple. When a target charges per resolution or per document rather than per seat, diligence treats it as a go-to-market decision. It is a category signal — functionally indistinguishable from how services firms have priced for decades. The counterargument, that AI captures labor budgets rather than software budgets, assumes the conclusion: that the cost structure will eventually look like software. Whether it does is the empirical question Dimension 2 exists to answer.

2. Cost Structure

The Markov Tax (perpetual probabilistic validation cost) is the key variable, and TDD rarely demands evidence that it is falling at scale. Heraclitus had it right: you cannot step into the same model twice. A successful prior run does not reduce the verification burden on the next one. If verification and exception-handling scale with throughput, margins converge toward services; the pitch deck is not the territory. Upstream model updates compound this: researchers at Stanford and UC Berkeley documented GPT-4's accuracy on a prime-number identification task shifting from 97.6% to 2.4% between its March and June 2023 versions. Version pinning is the organizational equivalent of unplugging the smoke detector: it buys silence while the technical debt compounds.

Benchmarkit’s 2025 survey (n=372) found only 15% of companies can forecast AI costs within ±10%. If the margin model collapses under volume doubling, the valuation is pricing a cost structure that does not yet exist. Diligence must demand verification minutes per unit, exception rate, inference cost as a percentage of COGS, and regression cadence under provider changes — all trending down.
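The volume-doubling test can be made concrete with a toy unit-economics model. The sketch below assumes per-unit verification cost scales as volume raised to the power (exponent minus one). An exponent of 1.0 means verification tracks throughput, and an exponent below 1.0 means it is sublinear. The function name, the 0.8 exponent, and the cost inputs are hypothetical, chosen only so the baseline lands on the 45% gross margin cited earlier.

```python
def gross_margin(volume_multiple, price, inference_cost,
                 verify_cost_at_base, verify_exponent):
    """Gross margin per unit at a multiple of baseline volume.

    Total verification cost is assumed to scale as volume ** verify_exponent,
    so the per-unit cost scales as volume ** (verify_exponent - 1).
    """
    verify_per_unit = verify_cost_at_base * volume_multiple ** (verify_exponent - 1.0)
    return 1.0 - (inference_cost + verify_per_unit) / price


# Hypothetical per-unit figures: $1.00 price, $0.20 inference, $0.35 verification.
PRICE, INFERENCE, VERIFY = 1.00, 0.20, 0.35

# Margins at 1x, 2x, and 4x baseline volume under each scaling assumption.
linear = [gross_margin(m, PRICE, INFERENCE, VERIFY, 1.0) for m in (1, 2, 4)]
sublinear = [gross_margin(m, PRICE, INFERENCE, VERIFY, 0.8) for m in (1, 2, 4)]

print("verification tracks volume:", [f"{m:.1%}" for m in linear])
print("verification sublinear:    ", [f"{m:.1%}" for m in sublinear])
```

Under linear scaling the margin does not move however much volume grows. Under sublinear scaling it widens with each doubling. The diligence question is which exponent the target's own data supports.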

3. Ownership and Architecture

TDD assesses proprietary code and IP but not dependency depth on rented intelligence. The evident failure mode is a long-familiar pattern: the vendor changes something upstream, and your control plane discovers it in production. The target typically lacks enforceable control over whether the foundation model provider ships its product as a feature, reprices API access, or withdraws the inference subsidies its unit economics depend on. The right question is what happens to margins if token costs double or triple — and whether the target has any contractual or architectural leverage over that scenario.
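The token-cost question reduces to a one-line sensitivity. The sketch below assumes price and all non-inference COGS stay fixed while inference cost is multiplied. The 45% starting margin is the average cited earlier; the 40% inference share of COGS is a hypothetical input, not a figure from the text.

```python
def margin_after_token_shock(gross_margin, inference_share_of_cogs, cost_multiplier):
    """Gross margin after inference cost is multiplied by cost_multiplier,
    holding price and all other COGS constant."""
    cogs = 1.0 - gross_margin                    # COGS as a fraction of revenue
    inference = cogs * inference_share_of_cogs   # inference portion of COGS
    other = cogs - inference                     # everything else in COGS
    return 1.0 - (other + inference * cost_multiplier)


START_MARGIN = 0.45      # average cited in the text
INFERENCE_SHARE = 0.40   # hypothetical share of COGS spent on inference

for multiplier in (1, 2, 3):
    shocked = margin_after_token_shock(START_MARGIN, INFERENCE_SHARE, multiplier)
    print(f"token cost x{multiplier}: gross margin {shocked:.0%}")
```

With these inputs a doubling roughly halves the margin and a tripling nearly erases it, which is why the inference share of COGS and any contractual price protection belong in the data request.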

The escape route is Progressive Determinization: migrating validated workflows from probabilistic inference to deterministic execution, permanently eliminating the Markov Tax and supplier-induced drift on each workflow. No framework evaluates whether the target is doing this, or whether the architecture is getting less dependent over time.
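One way to picture the migration is a router that sends a workflow to the model until it has been validated enough times, then serves it from frozen deterministic code. This is a minimal sketch of one possible reading, not a mechanism the text specifies. The class name, the `promote_after` threshold, and the stand-in `fake_model` function are all hypothetical.

```python
class DeterminizingRouter:
    """Routes a workflow through a model until it has been validated
    promote_after times, then serves it from a deterministic handler."""

    def __init__(self, model_call, promote_after=3):
        self.model_call = model_call      # probabilistic path (stand-in for an LLM call)
        self.promote_after = promote_after
        self.validated = {}               # workflow -> count of approved runs
        self.frozen = {}                  # workflow -> deterministic handler
        self.calls = {"model": 0, "deterministic": 0}

    def run(self, workflow, payload):
        handler = self.frozen.get(workflow)
        if handler is not None:
            self.calls["deterministic"] += 1
            return handler(payload)
        self.calls["model"] += 1
        return self.model_call(workflow, payload)

    def record_validation(self, workflow, handler):
        """Call after a reviewer approves an output; handler is the
        deterministic code intended to replace the model for this workflow."""
        self.validated[workflow] = self.validated.get(workflow, 0) + 1
        if self.validated[workflow] >= self.promote_after:
            self.frozen[workflow] = handler

    def determinization_rate(self):
        """Share of all calls so far that were served deterministically."""
        total = sum(self.calls.values())
        return self.calls["deterministic"] / total if total else 0.0


def fake_model(workflow, payload):
    # Hypothetical stand-in for an inference call.
    return payload.strip().lower()


def normalize(payload):
    # Deterministic replacement for the validated workflow.
    return payload.strip().lower()


router = DeterminizingRouter(fake_model, promote_after=2)
results = []
for text in ("  A ", " B", "C  "):
    results.append(router.run("normalize", text))
    router.record_validation("normalize", normalize)

print(results, router.calls, router.determinization_rate())
```

The number a diligence team would want trended over time is the output of `determinization_rate`: if it is not rising, the architecture is not becoming less dependent on rented inference.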

4. Legal Exposure

When pricing shifts from per-seat to per-outcome, the claims surface expands — yet only 17% of AI vendor contracts include performance warranties versus 42% for traditional SaaS. The delta between customer expectations and contractual obligations creates a liability vacuum, universally abhorred. SaaS providers cap liability at subscription fees and warrant uptime, not outcomes. MSPs and BPOs, which do sell outcomes, carry professional liability coverage, E&O insurance, and indemnification structures built over decades of case law. The AI companies pricing per-resolution have inherited the liability surface of a services firm while operating under the contractual architecture of a SaaS vendor — the worst of both worlds from an exposure standpoint. Actuarial frameworks for probabilistic risk exist, but the longitudinal claims data for AI-native failure modes does not. Meanwhile, a target one regulatory reclassification away from “high-risk” may lack the governance infrastructure to operate under that classification — meaning reclassification forces a structural overhaul, not a compliance exercise.

The Agentic Murderboard. Twelve metrics across four dimensions — three per quadrant, each orthogonal, none substitutable. Economic Identity: revenue mix by pricing model, revenue per employee, customer concentration. Cost Structure: Markov Tax rate, inference cost as % of COGS, cost variance under volume doubling. Ownership & Architecture: provider concentration, progressive determinization rate, regression cadence under provider changes. Legal Exposure: performance warranty coverage, liability architecture gap, regulatory reclassification distance. Any metric moving the wrong direction breaks the software thesis.
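The scorecard can be held as a small data structure with a single pass/fail rule. The sketch below takes the metric names from the paragraph above. The trend labels and the choice to treat an unmeasured metric as failing are assumptions, since the text only says that any metric moving the wrong direction breaks the thesis.

```python
MURDERBOARD = {
    "Economic Identity": [
        "revenue mix by pricing model",
        "revenue per employee",
        "customer concentration",
    ],
    "Cost Structure": [
        "Markov Tax rate",
        "inference cost as % of COGS",
        "cost variance under volume doubling",
    ],
    "Ownership & Architecture": [
        "provider concentration",
        "progressive determinization rate",
        "regression cadence under provider changes",
    ],
    "Legal Exposure": [
        "performance warranty coverage",
        "liability architecture gap",
        "regulatory reclassification distance",
    ],
}


def failing_metrics(trends):
    """trends maps metric name -> 'improving', 'flat', or 'worsening'.

    Returns (dimension, metric) pairs that are worsening or unmeasured.
    Assumption: a metric with no reading is treated as failing.
    """
    flagged = []
    for dimension, metrics in MURDERBOARD.items():
        for metric in metrics:
            if trends.get(metric, "unmeasured") in ("worsening", "unmeasured"):
                flagged.append((dimension, metric))
    return flagged


# Hypothetical readings: everything improving except the Markov Tax rate.
all_metrics = [m for metrics in MURDERBOARD.values() for m in metrics]
readings = {metric: "improving" for metric in all_metrics}
readings["Markov Tax rate"] = "worsening"

flagged = failing_metrics(readings)
print("software thesis holds" if not flagged else f"thesis broken by: {flagged}")
```

Because the metrics are not substitutable, the function returns every failing metric rather than a blended score.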

The bull case is not fiction. Margins are improving, and the best operators may reach gross margins in the low 60s within two years, provided verification costs decline with scale rather than tracking it. But we are pricing the option on determinization as if it has already happened.

The reclassification is latent, not inevitable — it needs a trigger: a deal that blows up on margin compression, a public company that misses on verification costs, a regulator that forces the category question. The window between recognizing the sorting criteria and the market pricing them is where the advantage lives.

Agents start as cogs. They end up as COGS.

Price accordingly.