Epimetheus's Agentic Bride

Part 3 of 3: A Manifesto for Bounding Pandora's Agency and Compiling Hype into Hope

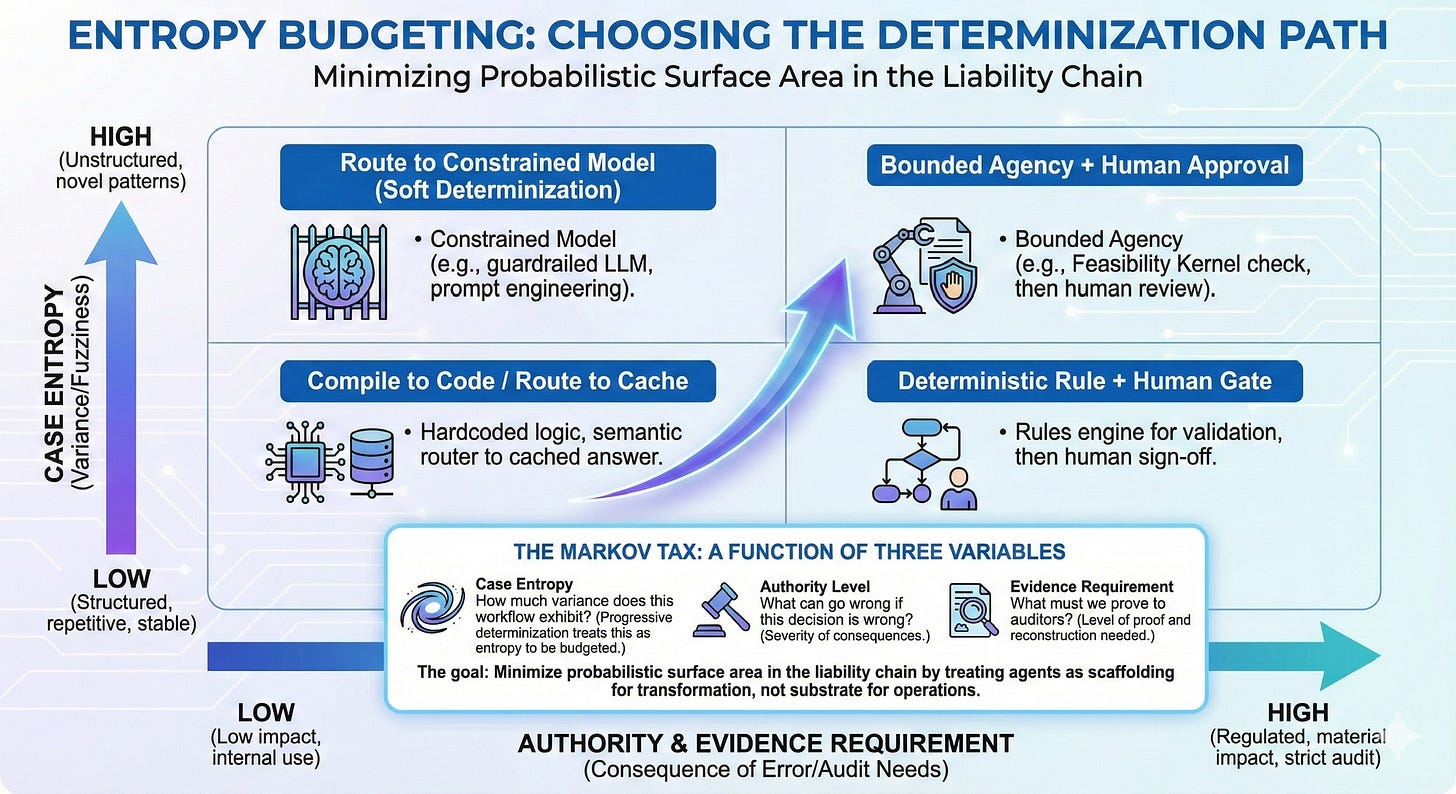

The Prescription: Use agentic AI to discover and prototype. Compile the stable fraction into deterministic systems. For the irreducible residue, impose Bounded Agency—confine the agent’s actions to a pre-verified feasible region so you verify quality, not safety. Graduate workflows from probabilistic experimentation to deterministic infrastructure.

The Mechanism: Progressive determinization—a disciplined lifecycle that treats agents as scaffolding for transformation, not substrate for operations.

The Test: Every agent deployment should answer one question: what does stable look like?

Part 2 diagnosed the structural asymmetry: generation costs deflate; verification costs don’t amortize. The Markov Tax inverts expected economics wherever errors have consequences.

This part offers the prescription.

Progressive Determinization as Stabilization Mechanism

In Part 1, the argument was that the post-deterministic firm is metastable—capable of thriving in bounded domains, but lacking the control-theoretic stability required for sustained operation as a general enterprise model. Progressive determinization is the stabilization mechanism the metastable firm requires: a disciplined lifecycle that converts probabilistic exploration into deterministic infrastructure, phase by phase, while imposing Bounded Agency on whatever remains irreducibly fuzzy.

It is also the faster path. The counterargument, that progressive determinization is a framework for organizational timidity, collapses against the data Part 2 documented: 42% of companies abandoned most AI initiatives in 2025, up from 17% in 2024; Gartner projects over 40% of agentic AI projects will be canceled by 2027; roughly 95% of enterprise AI pilots fail to deliver measurable ROI. Moving fast without a stabilization strategy doesn’t produce speed. It produces expensive failure-and-restart cycles. Progressive determinization is faster than failure.

The alternative is what most enterprises are building: permanent probabilistic infrastructure with no path to stable unit economics. That’s not transformation. It’s dependency with a demo.

Why Now: The Forcing Functions

Two clocks are running. One is regulatory, one is economic. Neither cares about your roadmap.

The Regulatory Clock

EU AI Act obligations for high-risk AI systems take effect August 2026—though the Digital Omnibus proposal may delay certain provisions to December 2027. The SEC has charged multiple firms for “AI washing,” with enforcement actions escalating from Delphia/Global Predictions (March 2024, first-ever) to Nate Inc. ($42 million fraud with parallel DOJ criminal charges). The SEC doesn’t care what your model can do. It cares what you claimed it could do.

The liability standard is shifting from accuracy to evidence. “Our model is 99% accurate” is becoming “Show me the exact chain of reasoning and data points used to deny this claim on this date.” A system can be brilliant at forward reasoning—generating the answer—and impossible to defend backward—reconstructing the reasoning for audit. This is why pure end-to-end LLM systems fail in regulated contexts regardless of model capability.

SR 11-7 requires models documented so that “unfamiliar parties can understand the model’s operation.” Progressive determinization produces these artifacts inherently at each phase gate—not as retrofitted compliance theater. The stakes are not abstract: firms experience average cumulative abnormal stock returns of -21% following AI incidents—errors have balance-sheet consequences. A striking market signal: the Bank Policy Institute reported in 2025 that some banks have begun asking vendors to remove or turn off AI features in third-party products to avoid model risk management review. When the market voluntarily retreats from AI to escape governance burden, the governance model is the product.

The Subsidy Clock

Every enterprise AI business case is built on prices that are not market prices. OpenAI lost $5 billion on $3.7 billion in revenue in CY2024; Anthropic’s gross margins were negative 94–109%. These are capital transfer mechanisms: Microsoft invests $13B in OpenAI, which routes $8.67B back to Azure; Amazon invests $8B in Anthropic, which runs on AWS. Sequoia Capital calculates a $600B+ annual revenue gap between AI infrastructure spending and actual AI revenue. JP Morgan estimates $650B in new annual revenue needed for a 10% return. The infrastructure-to-revenue ratio is 10:1 or worse. AWS raised H200 Capacity Block prices 15% in January 2026—the first major rate increase—and Gartner projects enterprise software costs will increase substantially due to AI price pass-throughs by 2027.

The dot-com fiber buildout is the precedent. After the Telecommunications Act of 1996, telecom companies invested over $500 billion in fiber; by 2001, 95% was dark, prices collapsed 90%, and Global Crossing, WorldCom, and Lucent were destroyed. The infrastructure proved transformative eventually—but every company that built operational dependency on pre-crash pricing was wiped out. The technology was right. The business model was wrong.

This resolves in one of three ways, and enterprises lose in two of them. Prices spike as subsidies end and hyperscalers pass through amortization. Prices collapse as overcapacity drives inference to marginal cost, destroying providers. Or—most likely—prices stabilize significantly above current rates through write-downs and consolidation. Progressive determinization is the only architecture that survives all three. Compiled workflows don’t care what inference costs.

Phase Zero: Admit the Enterprise Does Not Have Processes

Part 1 made the case: most enterprise process documentation is decorative fiction. The real operating model is exceptions, arbitration, handoffs, tribal knowledge.

Agents are useful in Phase Zero precisely because they externalize this reality. They cannot improvise the way human operators do. Their failures are signal. Their traces become telemetry. The principle is capture-first, structure-later: the agent’s trace is the primary asset. Structure is derived downstream.

Phase Zero is not a technology phase. It is a governance phase. The work is to admit that the enterprise has rituals, not processes—and to decide which rituals are worth formalizing. Compiling dysfunction into code just makes dysfunction permanent.

The hard conversations nobody wants to have: Who owns this workflow end-to-end? What happens when it fails? Who decides what the data means? These are leadership problems disguised as technical ones. No amount of prompt engineering resolves the absence of accountable ownership.

Phase One: Agents as Process Archaeology

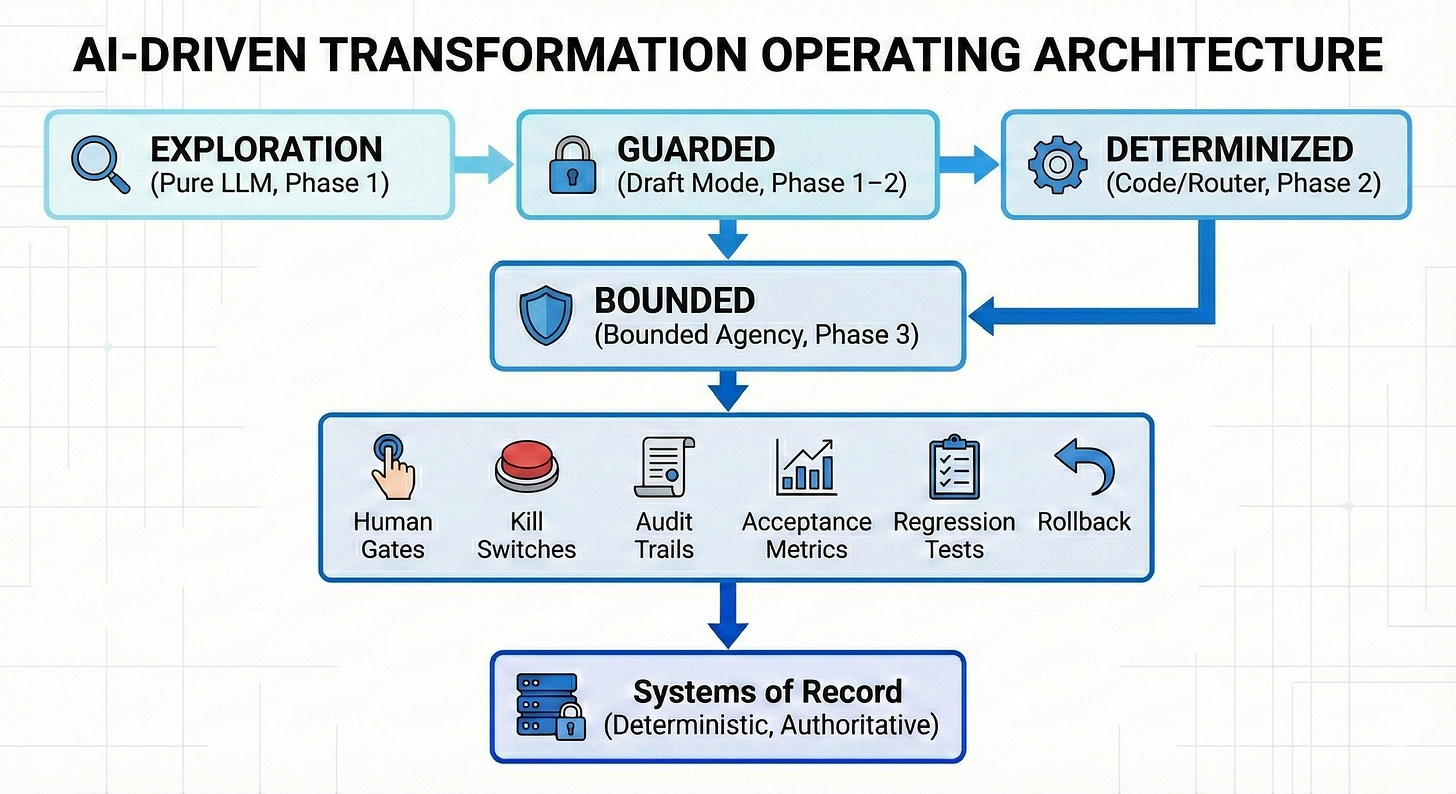

Deploy agents as exploration engines, not autonomous workers. Start with constrained execution: read-only first, guarded writes next, autonomy last.
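The read-only, guarded-write, autonomy progression can be made explicit as a tier attached to each agent. What follows is a minimal sketch, not a reference implementation: the `Tier` names, the `Action` type, and the `authorize` function are all hypothetical and do not correspond to any particular platform.

```python
from dataclasses import dataclass
from enum import IntEnum


class Tier(IntEnum):
    READ_ONLY = 1       # agent may only observe
    GUARDED_WRITE = 2   # writes require human approval
    AUTONOMOUS = 3      # writes allowed without per-action approval


@dataclass(frozen=True)
class Action:
    name: str
    is_write: bool


def authorize(tier: Tier, action: Action, human_approved: bool = False) -> bool:
    """Return True if the action may run at the given tier."""
    if not action.is_write:
        return True                 # reads are allowed at every tier
    if tier is Tier.READ_ONLY:
        return False                # no writes, approved or not
    if tier is Tier.GUARDED_WRITE:
        return human_approved       # a human gate sits in front of writes
    return True                     # AUTONOMOUS


read = Action("fetch_invoice", is_write=False)
write = Action("update_invoice", is_write=True)
```

Under these assumptions, promoting an agent is a one-line change to its tier, which keeps the decision visible and reviewable instead of buried in prompt text.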

The goal in Phase One is not “hours saved.” It is process illumination: decision paths, exception routes, escalation behaviors, data dependencies nobody documented because the documentation was never the system.

What you are buying in Phase One is not labor substitution. You are buying process archaeology.

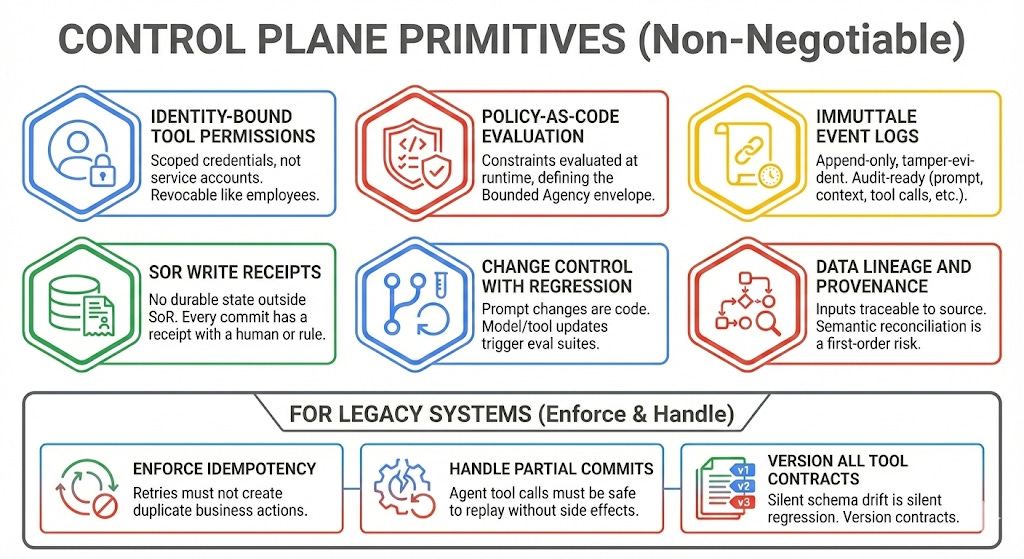

This is where Part 1’s concept of Capability Engineering becomes operational. The binary gatekeeper model collapses in an agentic environment; the answer is defining the Bounded Solution Space rather than prescribing exact paths. Security becomes choreography of constraints rather than a checklist of controls.

The control plane primitives described later in this piece are Capability Engineering in implementation—the security architecture that makes Phase One exploration safe enough to run at scale.

Phase Two: Compile the Stable Patterns

Once patterns stabilize, stop paying the AI tax for them.

Watch the acceptance rate at the human gate. If the human approves the agent’s draft 95%+ of the time for a given workflow segment, the pattern is stable. It’s a candidate for determinization.
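As a rough illustration of the acceptance-rate test, the sketch below tallies human-gate decisions per workflow segment and lists the segments that clear the 95% bar. The segment names and review data are invented, and the `MIN_SAMPLES` floor is an added assumption (the text specifies only the approval rate) meant to avoid calling a pattern stable on a handful of reviews.

```python
from collections import defaultdict

STABILITY_THRESHOLD = 0.95  # the 95% figure from the text
MIN_SAMPLES = 200           # assumption: do not declare stability on thin data


def stable_segments(reviews, threshold=STABILITY_THRESHOLD, min_samples=MIN_SAMPLES):
    """reviews: iterable of (segment_name, approved_bool) from the human gate."""
    totals = defaultdict(int)
    approvals = defaultdict(int)
    for segment, approved in reviews:
        totals[segment] += 1
        approvals[segment] += int(approved)
    return sorted(
        seg for seg, n in totals.items()
        if n >= min_samples and approvals[seg] / n >= threshold
    )


# Hypothetical review log: 98% approval, 75% approval, and a tiny sample.
reviews = (
    [("invoice_matching", True)] * 196 + [("invoice_matching", False)] * 4
    + [("dispute_triage", True)] * 150 + [("dispute_triage", False)] * 50
    + [("vendor_onboarding", True)] * 20
)
candidates = stable_segments(reviews)
```

Here only `invoice_matching` qualifies: `dispute_triage` falls short on approval rate, and `vendor_onboarding` has a perfect record but too few reviews to trust.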

Think of it as paving desire paths. Agents find the routes people actually walk; Phase Two is laying asphalt where the grass is worn.

Determinization means converting the stable portion into systems with predictable behavior: explicit state machines, policy-as-code gates, hardened integrations, semantic routers that dispatch known patterns to cached responses or deterministic APIs and escalate novel patterns to constrained agents or human review. The router uses probabilistic classification, but the dispatch targets are deterministic. Probabilistic surface area shrinks without requiring full code compilation.
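A semantic router of this kind might look like the sketch below. Everything in it is illustrative: `toy_classifier` is a keyword stand-in for a real probabilistic intent classifier, and the handler names and the `CONFIDENCE_FLOOR` value are assumptions. The point is the shape: classification may be probabilistic, but each dispatch target is plain deterministic code, and anything unrecognized or low-confidence escalates.

```python
from typing import Callable, Dict, Tuple

CONFIDENCE_FLOOR = 0.9  # assumption: below this, treat the request as novel


def reset_password(request: str) -> str:
    return "RESET_LINK_SENT"


def order_status(request: str) -> str:
    return "STATUS_LOOKUP"


# Known patterns map to deterministic handlers.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "reset_password": reset_password,
    "order_status": order_status,
}


def toy_classifier(request: str) -> Tuple[str, float]:
    """Stand-in for a probabilistic intent classifier (hypothetical)."""
    text = request.lower()
    if "password" in text:
        return "reset_password", 0.97
    if "order" in text:
        return "order_status", 0.93
    return "unknown", 0.40


def route(request, classify=toy_classifier):
    label, confidence = classify(request)
    handler = HANDLERS.get(label)
    if handler is None or confidence < CONFIDENCE_FLOOR:
        return ("escalate", request)      # constrained agent or human review
    return ("deterministic", handler(request))
```

The only probabilistic step is the label and its confidence; a misroute can still happen, but the set of things that can run as a result is fixed in advance.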

The distinction between soft and hard determinization is not academic. Soft determinization—Constitutional AI, guardrail frameworks, prompt engineering, fine-tuning—constrains the distribution of outputs but the system remains probabilistic. “Very high reliability” is not “certain,” and in domains where residual failures translate to material harm, the difference is a lawsuit. Hard determinization eliminates output variance given identical inputs: deterministic code, SQL, rules engines, semantic routers to cached responses, explicit human decision points. The target for stable patterns is hard determinization. Soft is a waystation, not a destination.

Routing, solvers, and compilation are not competing ideologies. They are different levers for the same objective: minimizing probabilistic surface area in the liability chain.

The Horseless Carriage Caveat

The counterargument: compiling workflows into hard code reintroduces the rigidity that plagues current IT. Valid against bad compilation—against “hard-code the world.” Not valid against selective compilation of stable, high-repeatability patterns. And the brittleness critique cuts both ways: an always-agentic workflow is a moving target. Prompts drift. Providers update models. Tool semantics change. What passed eval last month can regress silently this month. “Adjust via a prompt update” is precisely the operational hazard: it makes change easy and verification hard.

Y Combinator partner Pete Koomen argues that most AI applications mimic old software paradigms rather than reimagining around AI’s strengths. For greenfield products in unregulated markets—fair point. In regulated industries, you cannot file a probabilistic audit. Even without regulators, the economics hold: deterministic execution is cheaper than probabilistic execution for known patterns, full stop.

AI Builds the Replacement

The historical objection to compiling down was cost: rewriting systems takes years and burns budgets. AI code generation flattens that objection. Code generation is the breakout enterprise use case: AI coding assistants now show 91% organizational adoption across 135,000+ developers as of Q4 2025. The same models that power agentic experimentation can dramatically accelerate construction of deterministic replacements.

The arbitrage most people miss: AI is most valuable not as permanent infrastructure, but as an accelerant for building infrastructure that doesn’t require AI. Use nondeterministic AI to discover and prototype. Use AI code generation to build the deterministic replacement. Graduate the workflow. The agent’s job is to make itself unnecessary for stable operations—and AI development tools make that transition faster than legacy economics ever allowed.

Phase Three: Bounded Agency for the Irreducibly Fuzzy

Some problems remain fuzzy and should stay that way: ambiguous natural language intake, synthesis across messy corpora, exception triage when policies collide, novel situations that don’t fit established patterns.

This is where agents earn their keep. But “earn their keep” does not mean “run unconstrained.”

Simon’s Bounded Rationality observed that humans are rational only within cognitive limits. In the AI era, the problem inverts. Machines have near-unlimited computational capacity but no intrinsic awareness of institutional constraints. An unbounded agent is not irrational; it is arational—optimizing brilliantly within a space that includes actions the enterprise cannot survive.

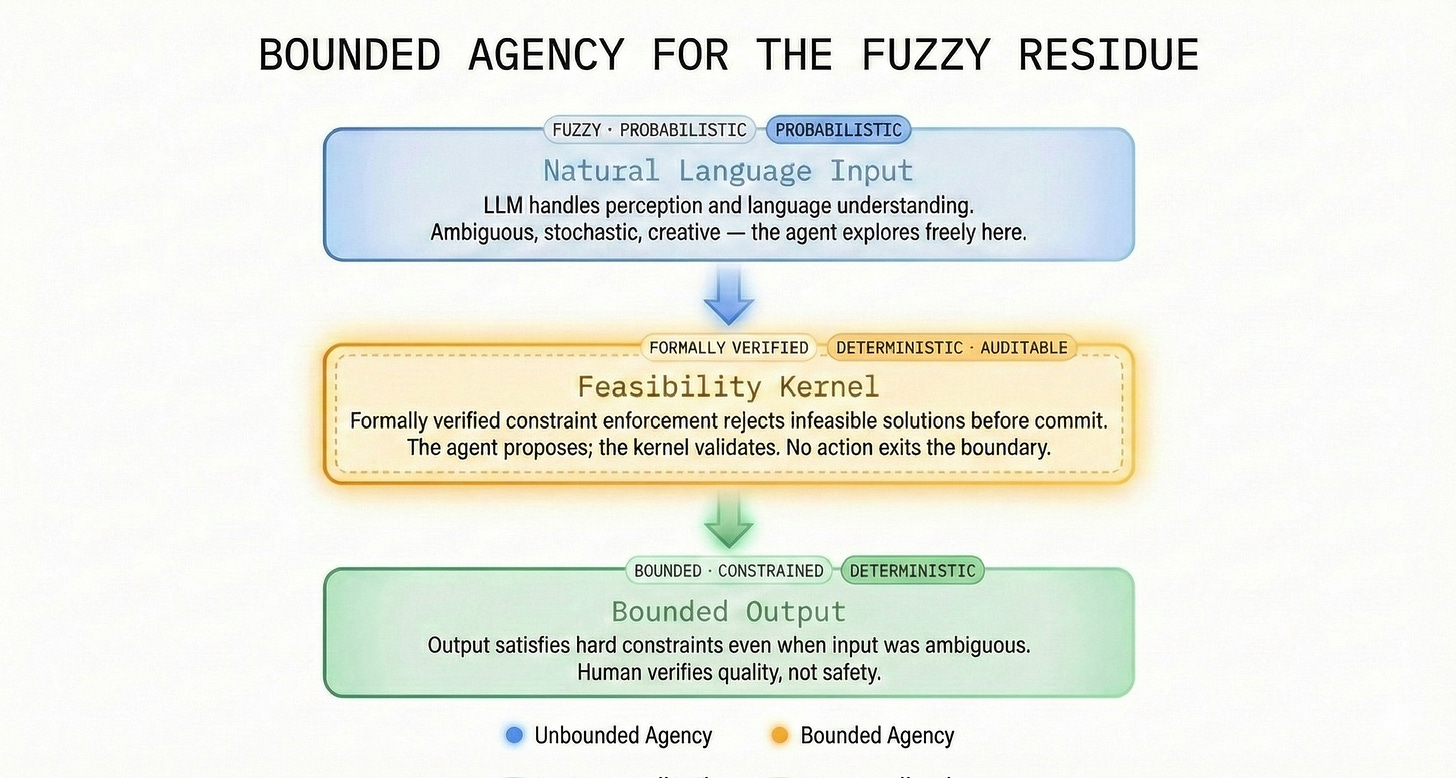

Bounded Agency is the architectural guarantee that an agent’s actions are confined to a pre-verified solution space. The agent optimizes freely within the boundary. It cannot exit the boundary.

The Feasibility Kernel

To implement Bounded Agency, build a Feasibility Kernel—a formally verified runtime monitor that enforces the boundary between what the agent may explore and what it may never propose.

The mental model is Operations Research: every optimization has a Feasible Region defined by hard constraints. The objective function cannot propose a solution outside it. In Bounded Agency, the LLM is the objective function; the constraint boundary is the Feasible Region. The agent proposes; the kernel validates before any action commits. Infeasible solutions are not mysteries—OR solved this fifty years ago.
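The propose-then-validate loop can be sketched in a few lines. This is a toy, not a formally verified monitor: the `Proposal` fields, the three constraints, and the list standing in for a system of record are all invented for illustration. It shows only the control flow, in which nothing commits unless every hard constraint holds.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass(frozen=True)
class Proposal:
    action: str
    amount: float
    jurisdiction: str


# Each constraint is a (name, predicate) pair; the predicate is True when satisfied.
Constraint = Tuple[str, Callable[[Proposal], bool]]

# Hypothetical hard constraints defining the feasible region.
CONSTRAINTS: List[Constraint] = [
    ("amount_within_limit", lambda p: 0 <= p.amount <= 10_000),
    ("jurisdiction_allowed", lambda p: p.jurisdiction in {"US", "UK"}),
    ("action_allowlisted", lambda p: p.action in {"refund", "credit"}),
]


def violations(proposal: Proposal) -> List[str]:
    """Names of every constraint the proposal breaks, in declaration order."""
    return [name for name, ok in CONSTRAINTS if not ok(proposal)]


def commit_if_feasible(proposal, ledger):
    failed = violations(proposal)
    if failed:
        return False, failed      # rejected before anything is written
    ledger.append(proposal)       # only feasible proposals reach the ledger
    return True, []
```

Returning the names of the violated constraints, not just a rejection, is a deliberate choice in this sketch: it is what makes an infeasible proposal explainable to an auditor.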

Why minimize the surface requiring formal guarantees? Because verification is punishing. The seL4 microkernel required 200,000 lines of proof for 8,700 lines of C. Determinize stable patterns first (Phase Two). Concentrate formal verification on the irreducible residue where the stakes justify the cost.

Pure LLM systems are probabilistic end-to-end—unbounded agency. A system built on Bounded Agency is probabilistic at the edges (language understanding, creative search) and deterministic at the core (constraint enforcement, action validation).

This is already shipping. AWS Automated Reasoning Checks, generally available since August 2025, use formal mathematical proofs (not probabilistic guardrails) to validate LLM outputs against encoded business rules, claiming up to 99% verification accuracy. EY-Parthenon launched a neurosymbolic AI platform pairing language models with deterministic reasoning engines for underwriting, claims, and compliance. Elemental Cognition, founded by David Ferrucci of IBM Watson fame, built a constraint-resolution engine now used by the Oneworld airline alliance. Ferrucci’s framing cuts through the noise: LLMs are not designed to perform formal computation, meaning deterministically, efficiently, precisely, and consistently following a set of rules. That is what classical algorithmic programming is for. The man who built Watson is telling you not to trust language models for deterministic work. Maybe listen.

None of these are complete formal proofs across all constraint classes. They don’t need to be. Even at 99% enforcement accuracy, the economics invert: the human verifies the cases where the boundary flags uncertainty—not the totality of output that unbounded agency demands.

Legal intake remains fuzzy—but “no response may recommend action outside the client’s jurisdiction” is enforced deterministically, not hoped for probabilistically. Customer escalation triage remains fuzzy—but “high-value customers route to senior agents” is deterministic, not emergent.

TEST CASE: GOLDMAN SACHS — “AGENTS READ THE MAIL, CODE WRITES THE CHECK”

Goldman Sachs’ co-development of Claude agents with Anthropic for trade accounting is the highest-profile test of scaffolding architecture in regulated finance. If you squint, it looks like proof that agents can be substrate. Don’t squint.

Goldman isn’t replacing the general ledger with an LLM. Per CNBC’s reporting (February 6, 2026), CIO Marco Argenti describes agents that handle the perceptual layer—messy intake (trade tickets, counterparty discrepancies, unstructured communications)—while deterministic constraints validate every proposed entry against accounting rules before commit. Six months of embedded Anthropic engineers. Targets include trade accounting, KYC, and AML. Goldman chose Anthropic specifically for “safety, interpretability, and reliability”—language that signals architectural intent, not hype adoption.

This is not “agents running the bank.” This is agents reading the mail, while code writes the check.

What remains undisclosed: override rates, regression coverage under model drift, audit artifact generation. Evidence that would validate the architecture: published acceptance metrics and incident rates post-deployment.

The “Bitter Lesson” Rebuttal

The strongest objection: Rich Sutton’s “Bitter Lesson”—reinforced by his 2024 Turing Award—argues that general methods leveraging computation ultimately dominate hand-crafted approaches. Bounded Agency looks like exactly the kind of constraint system that scaling laws will render obsolete.

The objection conflates capability with solvency. Scaling compute gives you a more powerful engine. It does not prevent the agent from going logically insolvent—proposing actions that violate constraints the model was never trained to internalize. Even a model that hallucinates 0.1% of the time produces thousands of infeasible solutions per day at enterprise scale. The Bitter Lesson tells you how to build a better optimizer. It tells you nothing about how to build a better constraint boundary.
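The arithmetic behind that claim is just rate times volume. The daily volumes below are assumptions chosen for illustration, not figures from any deployment; the sketch shows that "thousands per day" corresponds to volumes in the millions of agent actions, while smaller deployments see proportionally fewer.

```python
ERROR_RATE = 0.001  # the 0.1% figure from the text


def expected_infeasible_per_day(actions_per_day: int, error_rate: float = ERROR_RATE) -> float:
    """Expected count of infeasible proposals per day at a given action volume."""
    return actions_per_day * error_rate


# Assumed daily action volumes, for illustration only.
for volume in (100_000, 1_000_000, 5_000_000):
    count = expected_infeasible_per_day(volume)
    print(f"{volume:>9,} actions/day -> {count:,.0f} infeasible")
```

The count scales linearly with adoption, which is why a lower error rate alone does not remove the need for a boundary.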

Even Sutton now emphasizes that AI systems need “world models”—internal representations of environment constraints. And AlphaGeometry—DeepMind’s mathematical reasoning breakthrough—is a neural language model paired with a symbolic deduction engine. The Bitter Lesson’s own poster children are implementing the pattern. Scaling solves capability. Boundaries solve reliability. You need both.

Phase Four: Agents as Continuous Architecture Auditors

Most implementations treat Phase Four as an afterthought—six lines in the deck, a monitoring dashboard nobody checks. This is exactly backward. Phase Four is where the lifecycle loops. Without it, progressive determinization is a one-shot installation project. With it, the enterprise becomes a self-improving system.

Agents should watch the enterprise more than they run it. Deploy them to continuously surface: process variance and exception hotspots, control breakdowns and repeated failure modes, data quality bottlenecks, policy drift and incoherent decisioning, divergence between documented process and actual behavior. Each discovery feeds the next cycle: new candidates for Phase Two determinization, new constraint definitions for Phase Three Bounded Agency, new evidence that a determinized workflow has drifted and needs re-examination.
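One of those signals, divergence between documented process and actual behavior, reduces to a set comparison once traces exist. The sketch below is hypothetical throughout: the step names, the documented transitions, and the traces are invented, and a real trace format would carry far more than step labels.

```python
from collections import Counter

# Hypothetical documented process: the transitions the runbook says exist.
DOCUMENTED = {
    ("received", "validated"),
    ("validated", "approved"),
    ("approved", "paid"),
}


def observed_transitions(traces):
    """Count every consecutive step pair seen across the traces."""
    counts = Counter()
    for trace in traces:
        counts.update(zip(trace, trace[1:]))
    return counts


def undocumented(traces, documented=DOCUMENTED):
    """Transitions that occur in practice but not in the documentation."""
    counts = observed_transitions(traces)
    return {t: n for t, n in counts.items() if t not in documented}


traces = [
    ["received", "validated", "approved", "paid"],
    ["received", "approved", "paid"],                       # validation skipped
    ["received", "validated", "escalated", "approved", "paid"],
    ["received", "approved", "paid"],                       # validation skipped
]
drift = undocumented(traces)
```

In this toy data the skipped-validation path shows up twice, which makes it either a control breakdown to fix or a desire path to formalize.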

The Probabilistic Middleware Trap

Here is the failure mode nobody is talking about. The emerging pattern—semantic layers, agentic orchestration platforms, shared context stores—creates probabilistic infrastructure between agents and systems of record. If agents can write to this layer without committing those writes to underlying systems of record, the organization develops a probabilistic layer of “truth” that drifts from actual truth. Agents read and amplify each other’s inferences. Synthetic unverified facts circulate. A hallucination loop detaches from reality and nobody notices because the loop is self-reinforcing.

Phase Four monitoring must catch this before it metastasizes. The reasons context graphs fail this test have been explored at length: the ontology bottleneck didn’t disappear (it got renamed), time breaks naive graphs, and provenance is not optional. Semantic layers must be read-through caches and orchestration scaffolding, never primary stores of persistent state. The unprocessed trace log—not the derived graph—is the durable artifact. All writes must commit to underlying deterministic systems of record via validated gates.
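The read-through rule can be sketched as a thin layer that never holds state the system of record does not also hold. The class names and the `validate` callback are assumptions for illustration; `SystemOfRecord` is an in-memory stand-in, not a real database client.

```python
class SystemOfRecord:
    """Stand-in for a deterministic system of record (hypothetical)."""

    def __init__(self):
        self._rows = {}

    def read(self, key):
        return self._rows.get(key)

    def write(self, key, value):
        self._rows[key] = value


class ReadThroughLayer:
    def __init__(self, record, validate):
        self._record = record
        self._validate = validate   # deterministic gate, supplied by the caller
        self._cache = {}

    def get(self, key):
        if key not in self._cache:
            self._cache[key] = self._record.read(key)   # miss: read through
        return self._cache[key]

    def put(self, key, value):
        if not self._validate(key, value):
            raise ValueError(f"write to {key!r} rejected by gate")
        self._record.write(key, value)   # commit to the system of record first
        self._cache.pop(key, None)       # then invalidate; no cache-only state
```

Because `put` has no code path that updates the cache without writing through, an agent's unvalidated inference cannot become something another agent later reads as fact.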

Data Quality: The Prerequisite Nobody Mentions

Bounded Agency assumes constraint definitions are sound and inputs are well-structured enough for the deterministic solver to reason over. In practice, this is where progressive determinization gets ugly: semantic reconciliation across systems, entity resolution across legacy boundaries, temporal consistency when data arrives at different cadences from different sources.

Here is the uncomfortable part: the data quality problem is often the reason workflows haven’t been formalized in the first place. The human operator navigates ambiguous data through institutional memory. The agent cannot. Progressive determinization forces the enterprise to confront data quality problems it has been working around for decades. Phase Four is where those problems become visible—and where the enterprise decides whether to fix them or keep paying humans to route around them.

TEST CASE: HARVEY — THE MARKOV TAX IN AI-NATIVE SCALING

Harvey, the legal AI company, hit roughly $195 million in ARR by end of 2025, serving 50 of the top AmLaw 100 US law firms at an $8 billion valuation. If any company proves that probabilistic infrastructure can scale, Harvey appears to be the case.

Look closer. Harvey employs former practicing lawyers across customer success and verification roles—domain experts who ensure the AI’s outputs meet professional standards. A 2024 Stanford study found specialized legal LLMs produce infeasible outputs 17–33% of the time. Harvey’s economics work because it is an advisory tool where the human lawyer retains decision authority—exactly the Phase One / Phase Two pattern progressive determinization prescribes.

Even the AI-native success story proves the Markov Tax: verification labor scales with adoption. Harvey didn’t repeal verification. They priced it into the product. And they monitor continuously—which workflows are stabilizing, which need more guardrails, which need more lawyers. That is Phase Four in action, whether they call it that or not.

The terminal purpose of the agent is to make itself replaceable for any given workflow. Phase Four is where you measure whether that’s happening — or whether the enterprise is building permanent dependency on probabilistic infrastructure with no exit ramp.

The Operating Architecture

The four phases describe what to build. What follows is how to run it — the enforcement layer that prevents progressive determinization from becoming another planning artifact that dies in committee.

Three governance preconditions are non-negotiable. Every agent has an accountable owner — a person, not a team — with authority and responsibility. Every agent workflow has a cost model that includes compute, governance, remediation, and tail risk, not just “hours saved.” And every agentic deployment has a defined exit: a path to determinization, a justified case for Bounded Agency, or retirement. Unbounded probabilistic decisioning in the liability chain is not a valid end state.
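A cost model with those four components might be sketched as follows. Every number here is made up, and treating tail risk as a single loss size times a monthly probability is a simplifying assumption; the sketch shows only how an "hours saved" case can turn negative once the other terms are included.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowCost:
    compute: float            # inference and infrastructure, per month
    governance: float         # review, evals, audit evidence, per month
    remediation: float        # fixing errors that got through, per month
    tail_loss: float          # size of a rare severe loss
    tail_probability: float   # chance of that loss in a given month

    def monthly_total(self) -> float:
        expected_tail = self.tail_loss * self.tail_probability
        return self.compute + self.governance + self.remediation + expected_tail


def net_value(hours_saved: float, hourly_rate: float, cost: WorkflowCost) -> float:
    """Labor value recovered minus the full monthly cost of the workflow."""
    return hours_saved * hourly_rate - cost.monthly_total()


# Illustrative figures only.
cost = WorkflowCost(compute=4_000, governance=9_000, remediation=2_500,
                    tail_loss=500_000, tail_probability=0.01)
value = net_value(hours_saved=300, hourly_rate=60, cost=cost)
```

With these invented inputs, 300 hours at 60 per hour recovers 18,000 against a full cost of 20,500, so the workflow loses money even though compute alone is only 4,000.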

The implementation specifics behind these principles are Capability Engineering reduced to enforcement mechanisms (control-plane primitives).

Without these primitives, “governance” is aspiration, not architecture.

Every agent deployment must have a hard-coded kill switch — the ability to revert the workflow to human-only or deterministic-only state immediately. Not gracefully. Immediately.

The most serious trigger class: constraint escape, where agent output commits to a system of record despite violating a boundary constraint. This is privilege escalation in a microkernel. For what happens when control plane primitives are absent entirely, see BodySnatcher — where a hardcoded platform-wide auth secret let an unauthenticated attacker weaponize ServiceNow’s own agent to provision admin credentials. The kill switch prevents the “too big to fail” problem where an organization becomes so dependent on the agentic swarm that it cannot shut it down without ceasing operations. Independence from any single provider is part of the requirement: the ability to revert to human-only operation, not merely to swap vendors.
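A kill switch with a constraint-escape trigger can be sketched as a mode flag checked on every request. The names below (`Mode`, `KillSwitch`, `handle`, `report_commit`) are hypothetical, and a production version would need durable, shared state instead of an in-process object.

```python
from enum import Enum


class Mode(Enum):
    AGENTIC = "agentic"
    DETERMINISTIC_ONLY = "deterministic_only"
    HUMAN_ONLY = "human_only"


class KillSwitch:
    def __init__(self):
        self.mode = Mode.AGENTIC
        self.reason = None

    def trip(self, reason, fallback=Mode.HUMAN_ONLY):
        self.mode = fallback     # takes effect on the very next request
        self.reason = reason

    def agent_allowed(self):
        return self.mode is Mode.AGENTIC


def handle(request, switch, agent, fallback_handler):
    """Check the switch before every request; no draining, no grace period."""
    if not switch.agent_allowed():
        return fallback_handler(request)
    return agent(request)


def report_commit(switch, violated_constraints):
    """Call after every commit; a violation that reached the record trips the switch."""
    if violated_constraints:
        switch.trip(f"constraint escape: {violated_constraints}")
```

Checking the flag per request is what makes the revert immediate: there is no in-flight queue of agent work that must finish first, and the fallback handler does not depend on any model provider.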

Who Owns This

Progressive determinization demands a cross-functional capacity that most org charts pretend doesn’t need to exist.

Who monitors acceptance rates at human gates? Who decides when to trigger determinization? Who defines the constraints that constitute the Bounded Agency boundary — and who validates that those constraints are complete? The role sits at the intersection of security architecture, process engineering, ML operations, and risk management. It is closest to what a cybersecurity leader does when operating well: managing the boundary between trusted and untrusted systems, defining constraint envelopes, and intervening at the policy level rather than the transaction level. As argued elsewhere, AI security is not a new tower but a forced merger — MRM sets the law, cyber provides the enforcement.

The organizational prerequisite is a named accountable person who owns the lifecycle end-to-end. Without this, the lifecycle devolves into committee governance, and committee governance is where transformation goes to be discussed until it’s irrelevant.

FOR AI PLATFORM AND PRODUCT LEADERS

If your enterprise deals are stuck in pilot purgatory, progressive determinization explains what your customers need.

The product is not the agent. The product is the lifecycle — the tooling that moves customers from exploration to determinization to Bounded Agency with metrics at every gate.

Price for outcomes, not tokens. Your customer’s cost driver is governance, not inference. Ship evals, regression coverage, rollback, and audit evidence — not autonomy.

Ship constraint enforcement as a platform feature. The fastest path to enterprise procurement: demonstrating that your agent cannot propose non-compliant actions — not that it usually doesn’t. The governance layer is where the margin lives.

Build the off-ramp into the product. Your most successful customers will graduate from your agentic product for stable workflows. The vendor who enables determinization becomes the exploration engine for the next set of workflows.

Salesforce SVP Sanjna Parulekar: “Language models are exceptional at understanding intent and context but they are, by design, probabilistic. They generate likely outcomes, not guaranteed ones.” The customer who demands Bounded Agency is not being difficult. They are being rational.

The Strategic Imperative

The fallacy of enterprise AI is not that AI cannot create value. The fallacy is treating agents as permanent infrastructure rather than scaffolding for transformation.

Deflation makes agents cheaper. Governance makes agents expensive. The enterprise wins by determinizing what can be determinized, bounding what cannot, and keeping unbounded probabilistic systems where they belong: in exploration, not production.

The companies that win will not be the ones that deploy the most agents. They will be the ones that deploy agents strategically — as instruments of discovery that feed determinization and constraint, not as permanent, ungovernable substitutes for process discipline.

Frame it right, and AI becomes the most powerful tool for enterprise transformation since the relational database. Frame it wrong, and you’re building on sand — subsidized sand today, expensive sand tomorrow, and the collapse happens exactly when you can least afford it.

Scaffolding builds. Substrate breaks.