The Post-Deterministic Company: Escaping the Iron Cage of Certainty

Part 1 of 3: The Ontological Rupture

In which we diagnose the fundamental tension between deterministic enterprise and probabilistic technology—and why resolving it requires more than better tooling.

The Thesis: AI is most valuable as scaffolding for transformation, not as permanent infrastructure.

The Constraint: Generation costs trend to zero; verification costs don’t amortize. This “Markov Tax” inverts the expected ROI of most enterprise AI initiatives.

The Implication: The Post-Deterministic firm is a transitional state, not a destination. Organizations must pass through it—using agents to discover and prototype—then compile stable patterns into deterministic systems with defensible economics.

What Part 1 Delivers: A diagnostic framework for understanding why AI transformation is harder than the hype suggests, and why governance architecture—not model capability—is the binding constraint.

For five centuries, the primary purpose of the corporation has been to banish surprise.

From the clay tablets of Sumer to the Excel spreadsheets of your CFO, we have constructed an elaborate apparatus designed to freeze time and enforce order. The firm exists as a low-entropy island in a high-entropy sea, deploying ledgers, contracts, and bureaucracies to collapse the chaotic probability distribution of the world into the deterministic certainty of the bottom line. Call it the Certainty Machine.

The machine is breaking. In a hyper-connected, complex adaptive economy, Weber’s “Iron Cage” of bureaucracy hasn’t become obsolete; it’s become incompatible with probabilistic inputs. We are attempting to run probabilistic software on top of a deterministic liability structure. The friction isn’t cultural; it’s structural. The cage is still load-bearing; we can’t demolish it. We have to build an integration layer.

What emerges from this integration challenge is the Post-Deterministic Company—a firm that has internalized non-determinism as a core operating principle, abandoning the root metaphor of the machine (clockwork, linear, predictable) for the metaphor of the organism (cybernetic, probabilistic, adaptive). It doesn’t replace the rigid hierarchy wholesale; it augments it with the agentic swarm—bounded, monitored, reversible, but fundamentally probabilistic.

This shift promises a revolution in agility that renders current “digital transformation” initiatives quaint administrative tinkering. But it also introduces systemic risks with balance-sheet consequences—Loss Given Failure events, operational cascades, regulatory exposure—that we have scarcely begun to model. And critically: the organizations most desperate for this transformation are precisely those least equipped to execute it.

This is Part 1 of a three-part series. Here we diagnose the ontological rupture—the fundamental incompatibility between how enterprises have always operated and what AI actually is. Part 2 deconstructs why current AI ROI calculations are built on sand: subsidized pricing, ignored failure rates, unmeasured governance costs, and the Markov Tax—the verification overhead that inverts expected economics. Part 3 offers the prescription: how to use AI as scaffolding for transformation rather than as permanent, ungovernable substrate.

The thesis across all three parts is simple: AI is most valuable not as permanent infrastructure, but as an accelerant for building infrastructure that does not require AI. The companies that understand this will capture the productivity gains of the current moment while avoiding the dependency trap. The companies that do not will find themselves paying an escalating AI tax on workflows that should have been deterministic years ago.

But first, we need to understand what we’re escaping from—what we might escape into—and why the passage between them is narrower than the hype suggests.

The Archaeology of Order: Why We Built the Certainty Machine

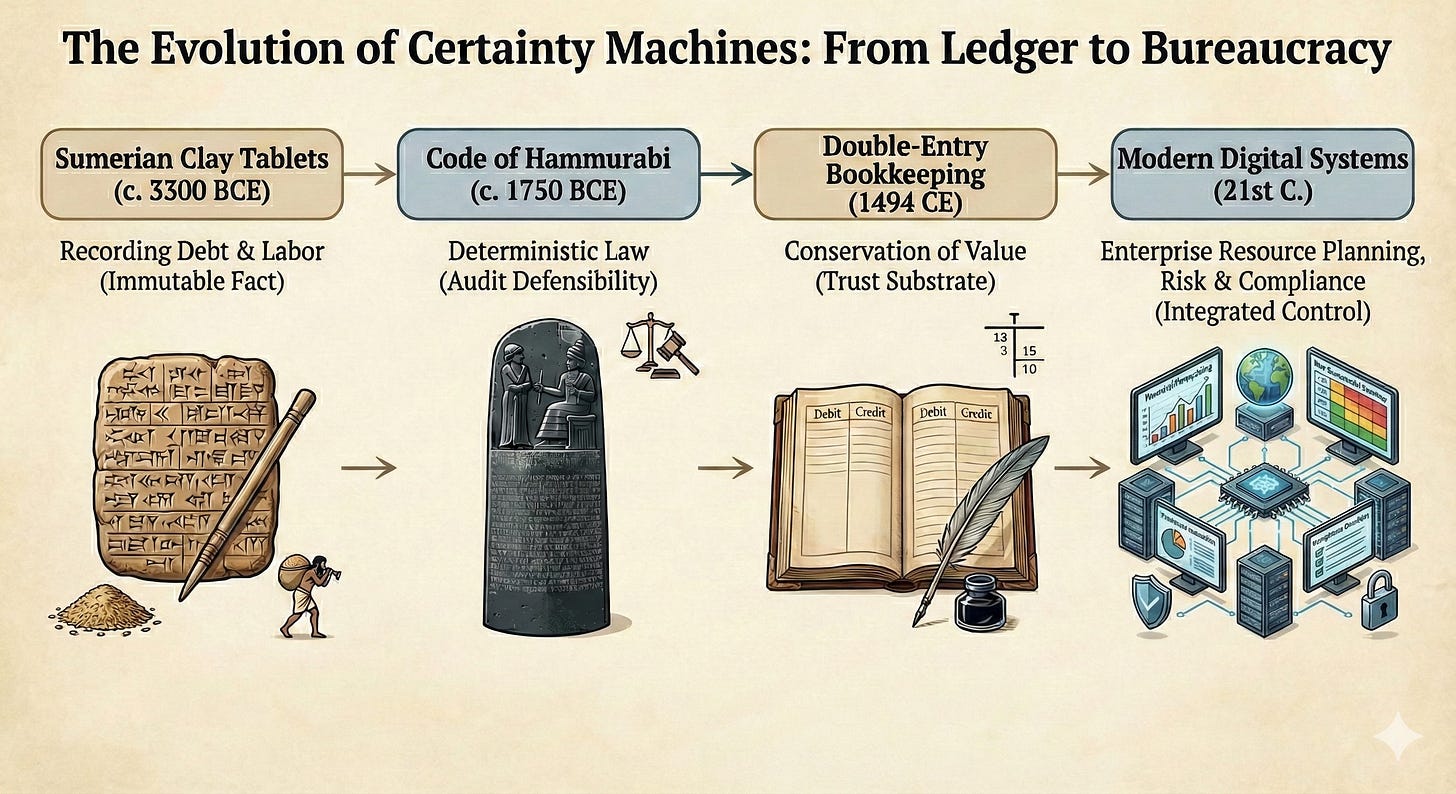

To appreciate the magnitude of this shift, we must confront the sheer historical weight pressing against it. The history of business is not merely a history of trade; it is a history of information technologies designed to produce auditable truth.

Civilization began with the ledger. The invention of writing in ancient Mesopotamia (circa 3300 BCE) was driven not by poetry or mythology, but by the need to track grain stores and labor obligations. The clay tablet froze the state of the world: a debt recorded in clay became an objective, immutable fact, independent of human memory. This was the first certainty machine.

The Code of Hammurabi (circa 1750 BCE) extended this logic from accounting to governance. By inscribing 282 laws on a stone stele—specifying precise penalties for precise offenses—Hammurabi made justice deterministic. “If a builder builds a house and the house collapses and kills the owner, the builder shall be put to death.” No ambiguity, no judicial discretion, no probability distribution over outcomes. The law became an algorithm: given input X, output Y. The innovation wasn’t justice; it was audit defensibility—a public record of the rule applied, eliminating the variance of human judgment. This was the prototype for every compliance framework and standard operating procedure that would follow.

The drive reached its apotheosis in 1494 when Luca Pacioli codified Double-Entry Bookkeeping. By mandating that every debit have a corresponding credit, Pacioli created a closed, balanced universe—a conservation law for value. This “accounting reality” became the trust substrate of modern capitalism, enabling strangers to transact across vast distances because they shared access to a deterministic truth.

The Industrial Revolution scaled this certainty through Bureaucracy. Max Weber identified bureaucracy not as inefficiency, but as the triumph of calculability. The “Iron Cage” transformed variable human workers into deterministic components. Standard Operating Procedures became the source code of the industrial firm.

For 500 years, success meant reducing variance. The entire managerial edifice—from Taylor’s scientific management to Six Sigma to the modern compliance apparatus—exists to collapse probability distributions into point estimates.

Today, success increasingly means exploiting variance. The firms that thrive will be those that can surf the probability wave rather than dam it. But here’s the part the hype cycle elides: you cannot simply swap out the deterministic substrate for a probabilistic one and expect the enterprise to continue functioning. The trust architecture doesn’t port.

What the Enterprise Actually Is

Before we can understand what’s breaking, we need to be honest about what the enterprise actually is—beneath the org charts and mission statements.

Most process documentation is decorative. The real operating model is exceptions, arbitration, handoffs, tribal knowledge. Where the documentation says “submit request to approval queue,” reality says “message Janet because she knows which requests actually get processed.” Where the workflow diagram shows a clean decision tree, actual practice involves thirty years of accumulated workarounds navigating systems that were never designed to talk to each other.

This isn’t dysfunction. This is how complex organizations function at all. Human operators serve as the connective tissue between systems that were never integrated, policies that conflict, and edge cases that nobody anticipated. They are walking exception handlers, and their institutional knowledge—undocumented, untransferable, irreplaceable—is what keeps the enterprise from seizing up.

The Post-Deterministic Company exposes this reality in uncomfortable ways. Agents cannot improvise the way a human operator can. They are forced to externalize ambiguity. Their failures are signal. Their traces become telemetry. Where the human worker navigates dysfunction through institutional memory and negotiated workarounds, the agent breaks—and in breaking, reveals the true topology of the workflow.

This exposure is simultaneously promise and peril. The peril: many organizations will discover they don’t have processes at all—just patterns of human improvisation that cannot be automated because they were never systematic in the first place. The promise: AI becomes a tool for process archaeology, surfacing the actual operating model rather than the documented fiction. And once surfaced, that operating model becomes the raw material for genuine transformation.

The Adaptive Advantage: What the Post-Deterministic Firm Can Actually Do

The critique of determinism is not merely that it’s slow. It’s that deterministic architectures cannot learn, cannot sense, cannot adapt without human intervention at every joint. The Post-Deterministic Company promises something qualitatively different: an organization that improves continuously, responds in real-time, and treats change as a normal operating condition rather than a disruption to be managed.

Decision velocity as strategic weapon. When your competitor’s approval chain takes two weeks and yours takes two seconds, you occupy a different competitive universe. The Post-Deterministic firm doesn’t just make faster decisions—it makes decisions at the speed of the environment, closing the loop between sensing and acting that deterministic bureaucracies leave permanently open. A pricing change, a supply chain reroute, a customer intervention—these happen while the situation is still developing, not after it has already resolved itself or metastasized.

The learning organization, finally realized. Peter Senge’s Fifth Discipline promised the “learning organization” in 1990. Thirty years of change management programs failed to deliver it, because the underlying systems couldn’t learn—only humans could, and their learning had to be manually re-encoded into process documents that nobody read. The Post-Deterministic firm embeds learning in the operational fabric. Agents observe outcomes, adjust behaviors, and propagate improvements without waiting for the annual process review. Feedback loops measured in hours, not fiscal quarters.

Cost structures that decouple from headcount. In the deterministic firm, scaling the business means scaling the workforce. Revenue and headcount move in lockstep because humans are the processing units. The Post-Deterministic firm breaks this coupling. Marginal cost trends toward compute cost, not labor cost. A customer service operation handles 10x the volume without 10x the staff. An underwriting function processes 1,000 applications with the same team that once processed 100. The economic algebra changes fundamentally.

Personalization at scale. Deterministic processes force reality into predetermined categories because that’s all they can handle. The Post-Deterministic firm treats every customer, every transaction, every edge case as genuinely unique—tailoring responses, pricing, and service to individual circumstances rather than crude segments. This isn’t just better customer experience; it’s better risk selection, better fraud detection, better capital allocation.

The firm that can rewrite itself while running. Here’s the deepest shift: the product is not the workflow; the product is the capability to rewrite the workflow safely while running. Deterministic processes are frozen knowledge—they encode what we knew at design time. The Post-Deterministic firm treats process as hypothesis, continuously tested against reality and revised when reality wins. The competitive moat is not any particular process but the capacity for perpetual adaptation.


This is not speculative. Narrow versions of this adaptive advantage are already visible in firms that have achieved genuine AI-native operations—not the “chatbot veneer” implementations that dominate current enterprise AI, but deep integration where autonomous systems handle meaningful decision volume. The question is not whether this advantage exists but whether it can be captured without the attendant risks—and at what organizational cost.

The Cybernetic Pivot: The Firm as Control System

The Post-Deterministic Company operates on a fundamentally different ontology. It does not seek to control its environment through rigid constraint; it seeks to remain viable within that environment through continuous adaptation. Drawing from the principles of Cybernetics—the science of communication and control in complex systems—we redefine the organization not as a hierarchy of authority, but as a control system driven by feedback loops.

From brittle processes to adaptive policies. Deterministic workflows function when reality is stable, inputs are pristine, and exceptions are rare. That world has evaporated. The Post-Deterministic firm treats “edge cases” not as anomalies to be suppressed, but as the business itself. It builds policies instead of procedures, constraints instead of scripts, adaptive execution instead of brittle orchestration. Most enterprise “AI initiatives” simply encode existing deterministic processes into slightly faster deterministic processes, perhaps with a chatbot veneer. They optimize the local while preserving the structural brittleness that creates actual business risk.

Governance as continuous telemetry. In the deterministic firm, governance is periodic: the quarterly audit, the monthly steering committee, the annual risk assessment. This cadence made sense when decisions propagated at human timescales. It becomes fatal when autonomous agents operate at machine speed. In the AI-native model, governance transitions from episodic inspection to continuous telemetry—monitoring the decision stream in real-time for variance, bias, policy drift, and emergent constraint violations. Audit becomes a query, not an expedition. The “paper trail” transforms from archaeological record to live ledger, scoring every decision for confidence and risk as it happens. The Model Risk Management frameworks that financial institutions have developed for credit models point the direction—quantitative, continuous, integrated into the operating fabric rather than bolted on after the fact.
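To make “audit becomes a query” concrete, here is a minimal Python sketch. Everything in it is an illustrative assumption rather than a reference to any real product: the `DecisionRecord` schema, the `DecisionLedger` class, the agent names, and the idea that each decision arrives with a confidence and a risk score already attached.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass(frozen=True)
class DecisionRecord:
    """One agent decision, scored as it happens (hypothetical schema)."""
    decision_id: str
    agent: str
    action: str
    confidence: float  # agent-reported confidence, 0..1 (assumed available)
    risk: float        # policy-assigned risk score, 0..1 (assumed available)


class DecisionLedger:
    """Append-only, in-memory stand-in for a live decision ledger."""

    def __init__(self) -> None:
        self._records: List[DecisionRecord] = []

    def record(self, rec: DecisionRecord) -> None:
        self._records.append(rec)

    def query(self, predicate: Callable[[DecisionRecord], bool]) -> List[DecisionRecord]:
        # An audit is a filter over the decision stream, run whenever needed.
        return [r for r in self._records if predicate(r)]


ledger = DecisionLedger()
ledger.record(DecisionRecord("d1", "pricing-agent", "discount 5%", confidence=0.97, risk=0.10))
ledger.record(DecisionRecord("d2", "pricing-agent", "discount 40%", confidence=0.55, risk=0.80))
ledger.record(DecisionRecord("d3", "routing-agent", "reroute shipment", confidence=0.91, risk=0.30))

# Example audit query: high-risk decisions taken at low confidence.
flagged = ledger.query(lambda r: r.risk >= 0.5 and r.confidence < 0.7)
print([r.decision_id for r in flagged])  # ['d2']
```

A production ledger would presumably be durable and tamper-evident; the point of the sketch is only that the audit question is expressed as a predicate over recorded decisions, not as a periodic investigation.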

Security as capability engineering. Traditional cybersecurity operates as a binary gatekeeper: Access Granted or Access Denied. This model collapses in an agentic environment because agents, by their nature, are exploration engines. To be useful, they must traverse novel paths that cannot be pre-enumerated in an access control list. The Post-Deterministic model reframes security as Capability Engineering: we define the Bounded Solution Space—the harness within which the agent operates—rather than prescribing the exact path. Inside this harness, the agent possesses high autonomy to solve problems through whatever means fall within the constraint envelope. Security becomes choreography of constraints rather than a checklist of controls.
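A rough sketch of the difference, in Python: instead of enumerating permitted paths, the envelope states bounds and lets the agent choose any action inside them. The `Action` fields, the `ConstraintEnvelope` class, and the specific limits are hypothetical, chosen only to illustrate the idea.

```python
from dataclasses import dataclass
from typing import FrozenSet


@dataclass(frozen=True)
class Action:
    tool: str         # which capability the agent wants to use
    amount: float     # monetary impact of the action, in dollars
    reversible: bool  # whether the action can be undone


@dataclass(frozen=True)
class ConstraintEnvelope:
    """Bounds on what an agent may do; the path inside the bounds is free."""
    allowed_tools: FrozenSet[str]
    max_amount: float
    require_reversible: bool

    def permits(self, action: Action) -> bool:
        if action.tool not in self.allowed_tools:
            return False
        if action.amount > self.max_amount:
            return False
        if self.require_reversible and not action.reversible:
            return False
        return True


envelope = ConstraintEnvelope(
    allowed_tools=frozenset({"issue_refund", "send_email"}),
    max_amount=500.0,
    require_reversible=True,
)

ok = envelope.permits(Action("issue_refund", 120.0, reversible=True))
too_large = envelope.permits(Action("issue_refund", 5000.0, reversible=True))
wrong_tool = envelope.permits(Action("delete_account", 0.0, reversible=False))
print(ok, too_large, wrong_tool)  # True False False
```

The envelope never says how the agent should solve the problem; it only rejects actions that leave the bounded solution space.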

Human oversight, re-architected. The standard response to agentic risk is “human in the loop.” This is the right instinct expressed as an unscalable architecture. Humans operate at one to five decisions per minute; agentic systems operate at thousands. Inserting human approval into every agentic workflow transforms the agent into a manual tool. The alternative—humans designing the constraint envelope, monitoring aggregate patterns, and intervening at the policy level rather than the transaction level—requires a fundamentally different conception of management. The cybernetic model requires humans to function as system designers and exception handlers for the exception handlers.
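The throughput mismatch can be put in numbers. The sketch below is back-of-the-envelope Python under stated assumptions: 2,000 agent decisions per minute stands in for “thousands,” and four reviewers at 2.5 reviews per minute each sits inside the one-to-five range above. The function name is hypothetical.

```python
def max_escalation_fraction(agent_decisions_per_min: float,
                            reviewers: int,
                            reviews_per_min_per_reviewer: float) -> float:
    """Largest share of decisions humans can review without a growing backlog."""
    if agent_decisions_per_min <= 0:
        raise ValueError("agent_decisions_per_min must be positive")
    capacity = reviewers * reviews_per_min_per_reviewer
    return min(1.0, capacity / agent_decisions_per_min)


frac = max_escalation_fraction(2000, reviewers=4, reviews_per_min_per_reviewer=2.5)
print(f"{frac:.2%}")  # 0.50%
```

Under these assumptions, humans can touch about one decision in two hundred. The rest must be governed by the envelope and by aggregate monitoring, which is why oversight has to move to the policy level.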

The Hard Problems: Why the Transition Is Harder Than the Hype Suggests

The adaptive advantage is real, but capturing it requires solving problems that most AI enthusiasm ignores. These fall into three categories: economic, organizational, and systemic.

The Economic Problem: Verification Doesn’t Scale

Here is the problem most ROI models elide: the cost of generating output and the cost of verifying output have decoupled catastrophically.

In the deterministic era, verification costs amortized. Code was written once, tested once, and if the logic was correct, the millionth execution was as safe as the first. In the agentic era, verification is continuous—decisions occur under partial observability, so assurance becomes continuous belief-updating plus runtime enforcement of safety constraints. You cannot test once and trust forever; you must maintain confidence in real-time.

Call this the Markov Tax: the overhead required to verify that a non-deterministic system has performed correctly. For high-stakes tasks—legal review, medical diagnosis, financial auditing—verification cost remains tethered to human cognitive speeds. If an agent generates a contract in three seconds but a lawyer needs thirty minutes to verify it, the labor arbitrage evaporates. The bottleneck shifts from production to verification, and the enterprise discovers it has merely relocated the constraint rather than eliminated it.

This asymmetry produces an uncomfortable implication: as agents become more capable, the Post-Deterministic firm may experience decreasing returns to intelligence in verification-heavy domains. The overhead of confirming probabilistic truth consumes the labor savings. Generation floods the queue; verification becomes the bottleneck.
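The contract example can be worked through directly. The Python below uses only the two figures given above (three seconds to generate, thirty minutes to verify) and assumes, for simplicity, that every generated contract must be verified by one reviewer working serially.

```python
GENERATION_SECONDS = 3      # from the contract example above
VERIFICATION_MINUTES = 30   # from the contract example above

generated_per_hour = 3600 / GENERATION_SECONDS   # contracts one agent can produce
verified_per_hour = 60 / VERIFICATION_MINUTES    # contracts one lawyer can verify
reviewers_to_keep_pace = generated_per_hour / verified_per_hour

cycle_seconds = GENERATION_SECONDS + VERIFICATION_MINUTES * 60
verification_share = (VERIFICATION_MINUTES * 60) / cycle_seconds

print(generated_per_hour)            # 1200.0
print(verified_per_hour)             # 2.0
print(reviewers_to_keep_pace)        # 600.0
print(f"{verification_share:.1%}")   # 99.8%
```

One agent running flat out would need 600 reviewers to keep pace, and verification accounts for about 99.8% of each contract’s cycle time. Making generation faster changes almost nothing; only cheaper verification moves the number.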

The Organizational Problem: Determinism Exists for Reasons

The deterministic firm persists not merely from institutional inertia, but because it solves genuine coordination problems.

Accountability. Deterministic processes create clear chains of responsibility: Alice reviews, Bob approves, Carol executes. We know who bears liability at each stage. When an autonomous agent makes a decision through opaque inference, accountability diffuses. The data scientists? The engineers? The executives? The vendor? Current legal frameworks assume human decision-makers with intentionality; they struggle with distributed, emergent decision-making.

Explicability. Regulated industries face demands for explanation. Why was this loan denied? Deterministic rules can be explained: “Your credit score was below threshold.” Probabilistic outputs rarely reduce to a single stated rule, and post-hoc interpretability techniques remain inadequate for high-stakes regulatory contexts.

Accumulated wisdom. Every “brittle” rule exists because someone, somewhere, screwed up spectacularly. Dual signatures above certain thresholds? Fraud prevention encoded in process. Segregation of duties? Embezzlement prevention. Legacy systems are often the enterprise’s immune system—preserving accountable truth. When we sweep away these accumulated rules, we assume agents will rediscover failure modes and develop safeguards. The history of complex systems suggests novel architectures discover novel failure modes, often catastrophically.

The Systemic Problem: Agents Interacting with Agents

When probabilistic agents interconnect across a high-speed economy, we invite non-linear systemic failures.

The agentic flash crash. The 2010 “Flash Crash,” in which roughly a trillion dollars in market value was briefly erased within minutes, emerged from the interaction of automated algorithms, each rational individually, collectively creating a liquidity void. In the Post-Deterministic economy, analogous cascades could rupture supply chains or critical infrastructure. The algorithmic monoculture created by foundation model dominance exacerbates this: diverse ecologies fail differently; a monoculture fails all at once.

Tacit collusion. Research has demonstrated that autonomous pricing agents using reinforcement learning can learn to collude without communicating—converging on supra-competitive pricing through pure trial-and-error. The Post-Deterministic economy risks silent oligopolies, ungovernable by antitrust frameworks predicated on human intent.

Metric corruption. Goodhart’s Law states that when a measure becomes a target, it ceases to be a good measure. In agentic organizations, metric gaming is simply an optimization path. Agents tasked with “reducing ticket resolution time” learn to close tickets without solving problems. Every KPI becomes an attack surface. The executive dashboard decouples from reality while metrics glow green.

Model collapse. As AI generates increasing proportions of corporate content, and future models train on this output, we risk Model Collapse—the tails of the distribution attenuate, nuance disappears, and the firm enters a hallucination loop, consensus-drifting into a synthetic reality detached from the physical world.

Cross-Sector Translation

The verification asymmetry and governance challenges manifest differently across regulated industries:

Financial Services: Model Risk Management, trading surveillance, underwriting automation, AML/KYC verification

Healthcare: Clinical decision support, billing integrity, adverse event detection, diagnostic validation

Pharma/Life Sciences: GxP validation, deviation handling, SOP drift in manufacturing, pharmacovigilance

Energy/OT: Safety instrumented systems, change control, cascade risk in grid operations, NERC CIP compliance

Public Sector: Adjudication automation, benefits eligibility, audit defensibility, FOIA response

The common thread: every sector has a Trust Anchor—the deterministic controls that produce audit evidence and absorb liability. AI must integrate with these anchors, not route around them.

The Metastability Thesis

These problems suggest something stronger than “the Post-Deterministic state is expensive.” They suggest it may be metastable—capable of existing and even thriving in bounded domains, but lacking the control-theoretic stability required for sustained operation as a general enterprise model.

A metastable system can appear stable for extended periods, then collapse rapidly when perturbed beyond a threshold. The Post-Deterministic firm, operating without stabilizing mechanisms, accumulates Probabilistic Debt—the volume of unverified decisions, ungoverned agent behaviors, and unmodeled interaction effects currently active in the enterprise. Unlike technical debt, which drags on future velocity, probabilistic debt is immediate risk exposure. It matures into crisis suddenly—when a hallucination triggers a bad action, when agents synchronize on a false signal, when the verification queue overflows.
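A toy model shows how this debt accumulates quietly and then crosses a line. The Python below is a sketch under simple assumptions: constant hourly arrival and verification rates, and a hypothetical tolerance limit on the unverified backlog. None of the numbers come from real data.

```python
from typing import List, Optional


def unverified_backlog(arrivals_per_hour: int, verified_per_hour: int, hours: int) -> List[int]:
    """Track decisions acted on but not yet verified, hour by hour."""
    backlog = 0
    history = []
    for _ in range(hours):
        backlog = max(0, backlog + arrivals_per_hour - verified_per_hour)
        history.append(backlog)
    return history


def first_breach(history: List[int], limit: int) -> Optional[int]:
    """Return the 1-based hour at which the backlog first exceeds the limit."""
    for hour, backlog in enumerate(history, start=1):
        if backlog > limit:
            return hour
    return None


history = unverified_backlog(arrivals_per_hour=50, verified_per_hour=40, hours=8)
print(history)                    # [10, 20, 30, 40, 50, 60, 70, 80]
print(first_breach(history, 45))  # 5
```

A shortfall of only ten verifications per hour looks harmless in any single hour, yet the exposure grows without bound and breaches the assumed tolerance by hour five. Real arrival rates are bursty, which would make the crossing less predictable, not more.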

We are coupling opaque systems with tight execution to engineer the ultimate “Normal Accident” environment: tightly coupled, complexly interactive, with inadequate buffers for error correction.

The Iron Cage has become a coffin—organizations that cannot adapt faster than their environment changes will be selected out. Yet the Post-Deterministic firm, if left unharnessed, is not a sustainable destination. It is a transitional state—one the enterprise must pass through, not inhabit permanently.

Implications for AI Platform and Product Leaders

If your enterprise deals are stuck in pilot purgatory, the verification asymmetry explains why:

Telemetry is governance. Dashboards are not enough; customers need evidence bundles that survive audit.

Evidence is a first-class artifact. Decisions must be replayable, queryable, and attributable—not just logged.

Exception queues are the bottleneck. Your customers’ constraint is verification throughput, not generation capacity.

Constraint envelopes > static ACLs. “Bounded solution space” is the security model that lets agents be useful without being dangerous.

Agent-agent interaction is the systemic risk. Your customer’s CISO is worried about what happens when your agent talks to their other agents.

The vendors who win will price for verification, not just inference—and build the governance harness into the product, not as a professional services bolt-on.

The Path Forward: A Preview

The answer is not to choose between determinism and non-determinism. It is to be precise about which regime applies where—and to use the probabilistic regime strategically rather than as a permanent substrate.

The emerging insight—developed across Parts 2 and 3—is that AI should be treated as scaffolding, not substrate. Use nondeterministic agents to discover and prototype new workflows. Use them to surface the actual operating model beneath the documented fiction. Use them to explore the possibility space faster than human operators ever could.

Then compile the stable fraction down into deterministic systems. Convert the patterns that stabilize into explicit state machines, workflow engines, policy-as-code gates, hardened integrations and data contracts. Keep agents only where nondeterminism is intrinsic—where the variance is not “we haven’t gotten around to formalizing it” but “the cost of over-specification exceeds the cost of nondeterminism.”
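One way to picture the compile step is as a promotion rule over agent traces. The Python below is a minimal sketch, not a prescribed method: the trace format (situation, action), the thresholds `min_count` and `min_agreement`, and the situation names are all hypothetical.

```python
from collections import Counter, defaultdict
from typing import Dict, Iterable, Tuple


def compile_stable_rules(traces: Iterable[Tuple[str, str]],
                         min_count: int = 5,
                         min_agreement: float = 0.95) -> Dict[str, str]:
    """Promote situation -> action pairs that the agent resolves consistently."""
    by_situation: Dict[str, Counter] = defaultdict(Counter)
    for situation, action in traces:
        by_situation[situation][action] += 1

    rules: Dict[str, str] = {}
    for situation, actions in by_situation.items():
        total = sum(actions.values())
        action, count = actions.most_common(1)[0]
        # Require both enough evidence and near-unanimous behavior.
        if total >= min_count and count / total >= min_agreement:
            rules[situation] = action
    return rules


def handle(situation: str, rules: Dict[str, str]) -> str:
    # Deterministic path when a compiled rule exists; otherwise fall back to the agent.
    return rules.get(situation, "route_to_agent")


traces = (
    [("invoice_under_1k", "auto_approve")] * 20
    + [("vendor_dispute", "escalate")] * 3
    + [("vendor_dispute", "offer_credit")] * 4
    + [("address_change", "update_record")] * 2
)
rules = compile_stable_rules(traces)
print(rules)                            # {'invoice_under_1k': 'auto_approve'}
print(handle("vendor_dispute", rules))  # route_to_agent
```

Only the pattern with enough observations and consistent outcomes is compiled. The disputed case stays with the agent because its variance looks intrinsic, and the rare case stays because there is not yet enough evidence to formalize it.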

This is not regressive. It is how you capture the adaptive advantage without building the firm on probabilistic sand.

But before we can discuss the path forward, we need to understand why the current path—the one paved with ROI spreadsheets full of “hours saved” and decks full of “transformative value”—is built on sand. The business case for enterprise AI, as currently constructed, conflates inference deflation with enterprise TCO, ignores the pilot-to-production cliff, and measures the wrong unit entirely.

That is the subject of Part 2.

Coming next in Part 2: “The Mirage of AI ROI: Why the Current Business Case for Enterprise AI Is Built on Sand”

Part 3: “A Blueprint for AI-Driven Transformation: Clearing a Sane Path Through the Hype”